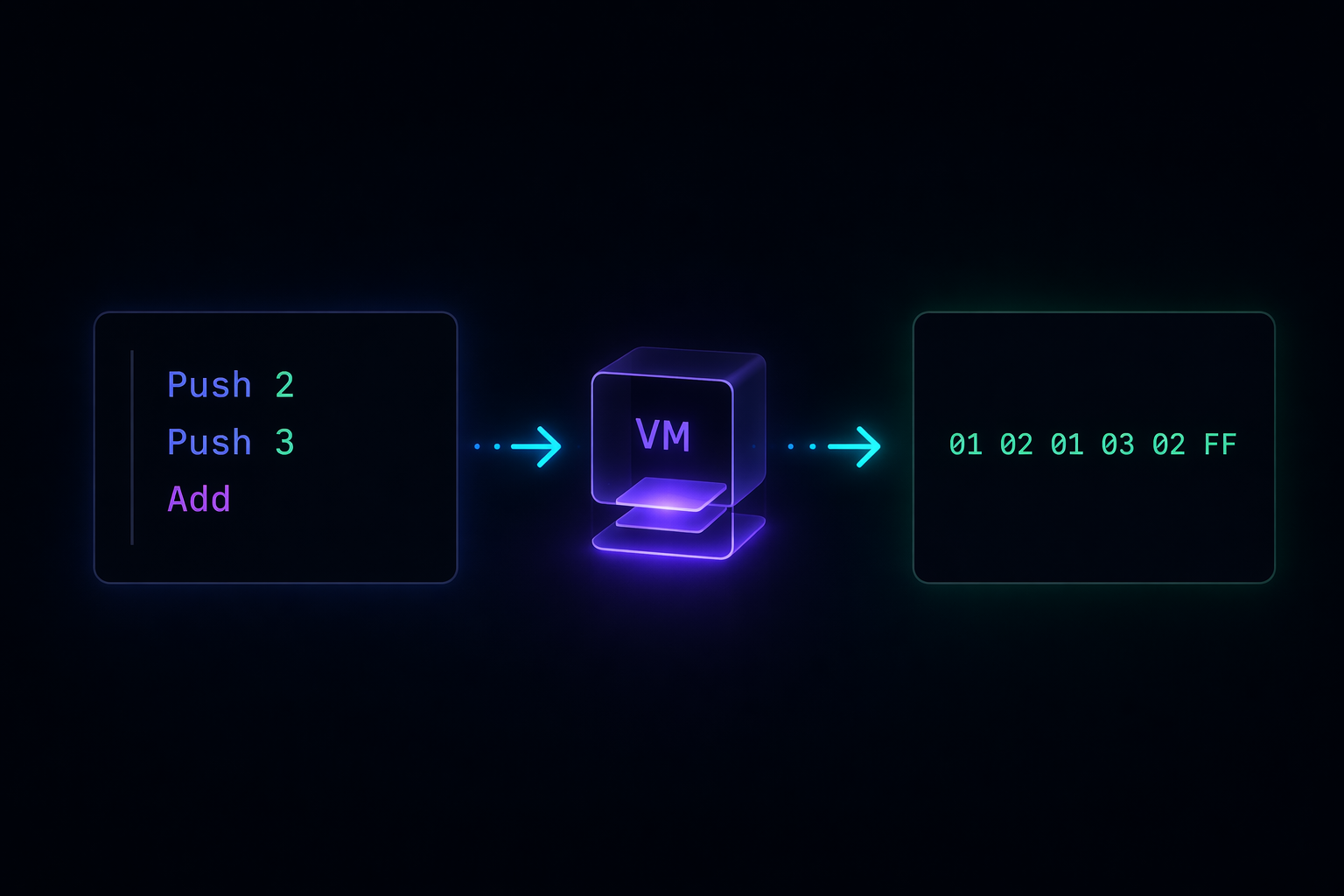

A small virtual machine built in three steps, from readable instructions to encoded bytecode, exploring how execution can be shaped, interpreted, and made harder to follow.

After spending some time trying to understand how systems like Denuvo work, one idea kept coming back to me. Execution is not always direct. At first, that sounds obvious because there are always layers involved, but this felt different. It was less about layers and more about control. So instead of going deeper into theory, I decided to build something small and follow how that idea evolves in practice.

Why a Virtual Machine

Before writing any code, it helps to think about what a virtual machine actually is at the conceptual level, because the term gets used in a few different ways and the differences matter.

When people say virtual machine in the context of systems like Java or Python, they usually mean a runtime that executes an intermediate representation. The source code gets compiled into bytecode, and then a separate program takes responsibility for running it. That execution can happen through interpretation, where instructions are handled one by one, or through just-in-time compilation, where parts of the bytecode are translated into native machine code at runtime. In both cases, the CPU is not executing your original source directly. It is executing code that is produced, transformed, or mediated by the runtime.

When people say virtual machine in the context of protection systems or obfuscators, they mean something slightly narrower and more deliberate. The point is not just to add a layer of execution. The point is to define a custom execution environment that is opaque to anyone who did not build it. The instruction set is private. The state is hidden. The mapping between what you see and what happens is intentionally obscured.

Both cases share the same core idea. There is a program, and there is something that runs the program. The CPU runs that something, not the program directly. That extra step is where control lives.

The mental model we start with

When we write code in a language like Rust, the mental model feels clean. You write logic, the compiler translates it into native code, and the CPU runs that result. You do not think much about what sits in between. The compiler is a tool, not a presence. It does its job and disappears.

A virtual machine breaks that assumption. Now the CPU is executing a runtime that defines how your logic behaves. That runtime may interpret instructions, transform them, or compile them further at runtime, but in all cases it controls execution.

That extra layer is where everything interesting happens. The runtime decides what each instruction means. It defines the structure of the state. It controls how execution moves from one step to the next. That is a lot of power concentrated in one place, and it becomes especially relevant when the goal is to make execution difficult to understand from the outside.

To make that concrete, I started with the simplest possible version: a small instruction set, readable names, predictable behavior, and a direct mapping between what you see and what happens.

Step 1: A transparent stack machine

The first version is a stack-based virtual machine. Stack machines are a natural starting point because the execution model is simple and the state is minimal. You push values onto a stack, you apply operations that consume and produce values on that stack, and you read results when you need them. There are no registers to track, no memory addressing to worry about, and no complex calling conventions.

enum Op {

Push(i32),

Add,

Sub,

Print,

Halt,

}

fn run(program: &[Op]) {

let mut pc = 0;

let mut stack = Vec::new();

loop {

match program.get(pc) {

Some(Op::Push(val)) => stack.push(*val),

Some(Op::Add) => {

let b = stack.pop().expect("stack underflow");

let a = stack.pop().expect("stack underflow");

stack.push(a + b);

}

Some(Op::Sub) => {

let b = stack.pop().expect("stack underflow");

let a = stack.pop().expect("stack underflow");

stack.push(a - b);

}

Some(Op::Print) => {

println!("{}", stack.last().unwrap());

}

Some(Op::Halt) | None => break,

}

pc += 1;

}

}

fn main() {

let program = vec![

Op::Push(2),

Op::Push(3),

Op::Add,

Op::Print,

Op::Halt,

];

run(&program);

}

The pc variable is the program counter. It tracks which instruction we are currently executing. Each iteration of the loop reads the instruction at pc, executes it, and advances. The stack is a growable list that serves as the working memory for the machine. When Add fires, it pops the top two values, sums them, and pushes the result back. Everything is visible and everything is predictable.

Running this program pushes 2, then pushes 3, adds them to get 5, prints 5, and halts. You could trace this on paper in thirty seconds. That is the point. At this level, everything is transparent. The instructions describe exactly what they do, and the execution loop reflects that directly.

This is useful for learning, but it also hides something important. The clarity here is not required by the system. It is a design choice. Nothing about a virtual machine forces instruction names to be descriptive, or forces the behavior of an operation to be pure, or forces the program representation to be human-readable. All of those things can be removed independently, and the machine keeps working exactly as before.

Step 2: Removing readability without removing capability

The next step is to remove that clarity without changing the underlying capability. Instead of descriptive instruction names, we reduce everything to symbols. The behavior stays the same in structure, but the readability disappears entirely. We also introduce something new: hidden state that participates in computation without being visible in the instruction stream.

#[derive(Clone)]

enum Op {

A(i32),

B,

C,

D,

X,

}

struct VM {

stack: Vec<i32>,

hidden: i32,

}

impl VM {

fn new() -> Self {

Self {

stack: Vec::new(),

hidden: 0,

}

}

fn run(&mut self, program: &[Op]) {

let mut pc = 0;

loop {

match program.get(pc) {

Some(Op::A(val)) => self.stack.push(*val),

Some(Op::B) => {

let b = self.stack.pop().expect("stack underflow");

let a = self.stack.pop().expect("stack underflow");

let result = a + b + self.hidden;

self.stack.push(result);

self.hidden = result % 2;

}

Some(Op::C) => {

let b = self.stack.pop().expect("stack underflow");

let a = self.stack.pop().expect("stack underflow");

self.stack.push(a - b);

}

Some(Op::D) => {

println!("{}", self.stack.last().unwrap());

}

Some(Op::X) | None => break,

}

pc += 1;

}

}

}

fn main() {

let program = vec![

Op::A(2),

Op::A(3),

Op::B,

Op::D,

Op::X,

];

let mut vm = VM::new();

vm.run(&program);

}

Several things changed here, and each one matters for a different reason.

The instruction names are now single letters. A pushes a value, B adds, C subtracts, D prints, X halts. If you encounter this code without context, the names give you nothing. You have to read the implementation of each arm to understand what it does. That shifts the burden from recognition to reconstruction.

The VM is now a struct with its own state. The hidden field is not part of the instruction stream. It is not something a caller sets. It evolves internally as the machine runs. The addition in Op::B is no longer pure: the result depends on hidden, and hidden changes after every addition based on the result. That means the same sequence of instructions can produce different results depending on what ran before it. The operation is no longer referentially transparent. You cannot understand it in isolation.

This is a meaningful shift. In the first version, you could understand any instruction by reading its case in the match block. In this version, understanding Op::B requires you to also know the current value of hidden, which requires you to trace every previous execution of Op::B. The history of execution becomes part of the state you need to reconstruct.

At this point, you can no longer rely on names to understand the system. The meaning of each instruction is defined only by how it is implemented, and the behavior of some instructions depends on accumulated state that is not visible anywhere in the program itself.

Step 3: Moving to bytes

The final step is to remove readability entirely and move into a byte-oriented representation. Instead of symbolic instructions, the program becomes a sequence of bytes. This is closer to how real systems operate, where the representation is not meant to be human-readable at all.

We also add one more layer: the opcodes are XOR-encoded. Before the interpreter can even decide what to do, it has to transform the byte it reads. The raw program does not contain the opcodes directly. It contains the opcodes masked with a key, and the key lives only inside the interpreter.

fn run(bytecode: &[u8]) {

let mut pc = 0;

let mut stack = Vec::new();

let mut hidden = 0;

loop {

let key = 0x5A;

let opcode = bytecode[pc] ^ key;

match opcode {

0x01 => {

let val = bytecode[pc + 1] as i32;

stack.push(val);

pc += 2;

continue;

}

0x02 => {

let b = stack.pop().expect("stack underflow");

let a = stack.pop().expect("stack underflow");

let result = a + b + hidden;

stack.push(result);

hidden = result % 2;

}

0x03 => {

let b = stack.pop().expect("stack underflow");

let a = stack.pop().expect("stack underflow");

stack.push(a - b);

}

0x04 => {

println!("{}", stack.last().unwrap());

}

0xFF => break,

_ => panic!("unknown opcode"),

}

pc += 1;

}

}

fn main() {

let raw = vec![

0x01 ^ 0x5A, 0x02,

0x01 ^ 0x5A, 0x03,

0x02 ^ 0x5A,

0x04 ^ 0x5A,

0xFF ^ 0x5A,

];

run(&raw);

}

There are a few things worth unpacking here.

The program is now a Vec<u8>, a sequence of raw bytes with no inherent structure. Looking at raw in main, you see values like 0x5B, 0x02, 0x58. Those numbers carry no meaning on their own. You need to know the key, and you need to know the decoding step, before any of it makes sense.

The decoding step is bytecode[pc] ^ key, where key is 0x5A. XOR is a simple and reversible operation: if you XOR a value with a key to encode it, you XOR again with the same key to decode it. Here the encoding happens when we construct raw in main, and the decoding happens at the top of each loop iteration. The interpreter always works with decoded opcodes internally, but what is stored externally is always encoded.

The instruction for pushing a value, opcode 0x01, now spans two bytes. The first byte is the opcode itself, and the second byte is the operand. After reading both, pc jumps forward by two instead of one. This is how variable-length encoding works, and it is one of the things that makes disassembly harder because you cannot simply treat every byte as an independent instruction.

The rest of the behavior carries over from the previous version. The hidden state participates in addition. The stack holds working values. The halt instruction ends the loop.

What the progression actually shows

Looking at all three versions together, the operations are still fundamentally the same. Push, add, subtract, print, halt. That has not changed. What changed is the relationship between what is visible and what is happening.

In the first version, the relationship is direct. The instruction set is the documentation. You read the code and you understand the behavior.

In the second version, the relationship is indirect. Names no longer carry meaning, and the behavior of some operations depends on state that accumulates invisibly over time. Understanding requires tracing, not reading.

In the third version, the relationship is encoded. The program is not in a form that can be read at all without knowing how to decode it, and the decoding step lives inside the interpreter. There are now two distinct views of the system: the external representation, which looks like arbitrary numbers, and the internal execution, which only becomes clear once you understand the full pipeline from raw bytes to decoded opcodes to stack operations.

The system did not grow significantly in size across these three steps. The core operations stayed the same. But the effort required to understand the program from the outside grew substantially at each stage.

Some directions this can go

This is a minimal foundation, and it is worth thinking about where the complexity actually comes from in real systems that use this kind of approach.

One direction is instruction set size. A larger and more irregular instruction set means more cases to analyze and more surface area for behavior that is hard to summarize. Real obfuscating VMs often have dozens or hundreds of opcodes, many of which overlap in behavior or differ only in subtle ways.

Another direction is the encoding layer. XOR with a fixed key is the simplest possible encoding. In practice, the key can vary per instruction, per block, or per execution. The transform itself can be more complex than XOR. The bytecode can be split across multiple regions with different encoding rules applied to each.

A third direction is state complexity. The hidden field in our machine is a single integer that updates in a simple way. Real implementations can carry significantly more internal state, use that state in more operations, and update it through more complex functions. The more state participates in computation, the harder it becomes to reason about any individual operation without understanding the full history of execution.

A fourth direction is self-modification. The bytecode itself can change during execution. An instruction can rewrite other instructions. The program you started with is not the program that runs by the end. This breaks a lot of standard analysis techniques that assume the program is static.

None of these directions require fundamentally new ideas. They are all extensions of what is already present in the third version: separation between representation and execution, hidden state that shapes behavior, and encoding that requires context to interpret.

The shift that matters here is not technical in a narrow sense. It is conceptual. Once you build even a small virtual machine, the assumption that execution is direct and transparent starts to feel less natural. Execution is a process that can be shaped. The interpreter defines the rules. The representation can be made as opaque or as clear as the designer chooses. The CPU only ever sees the interpreter.

That is the part that changes how you think about analysis and protection and the relationship between code and behavior. It is not that execution becomes mysterious. It is that it becomes something that can be deliberately designed to resist being followed, and the tools for doing that are not complicated. A few layers of indirection, some hidden state, and a simple encoding step are enough to break the direct connection between what you see and what actually runs.

To make this more concrete, I implemented all three versions to follow this progression in practice.

If you want to explore it yourself, the code is here