An exploration of Denuvo’s architecture from a low-level perspective, focusing on code virtualization, execution flow, and environment-based validation.

Lately, as I’ve been going deeper into low-level programming and reverse engineering, I ended up stumbling into something I had heard about for years but never really tried to understand properly: Denuvo.

Like most people, I only knew it by reputation. Hard to crack, controversial, sometimes blamed for performance issues. But at some point I stopped caring about the noise around it and started asking a different question.

What is it actually doing under the hood?

The more I looked into it, the more I realized that Denuvo is not really about blocking access. It is about making the program hard to understand in the first place.

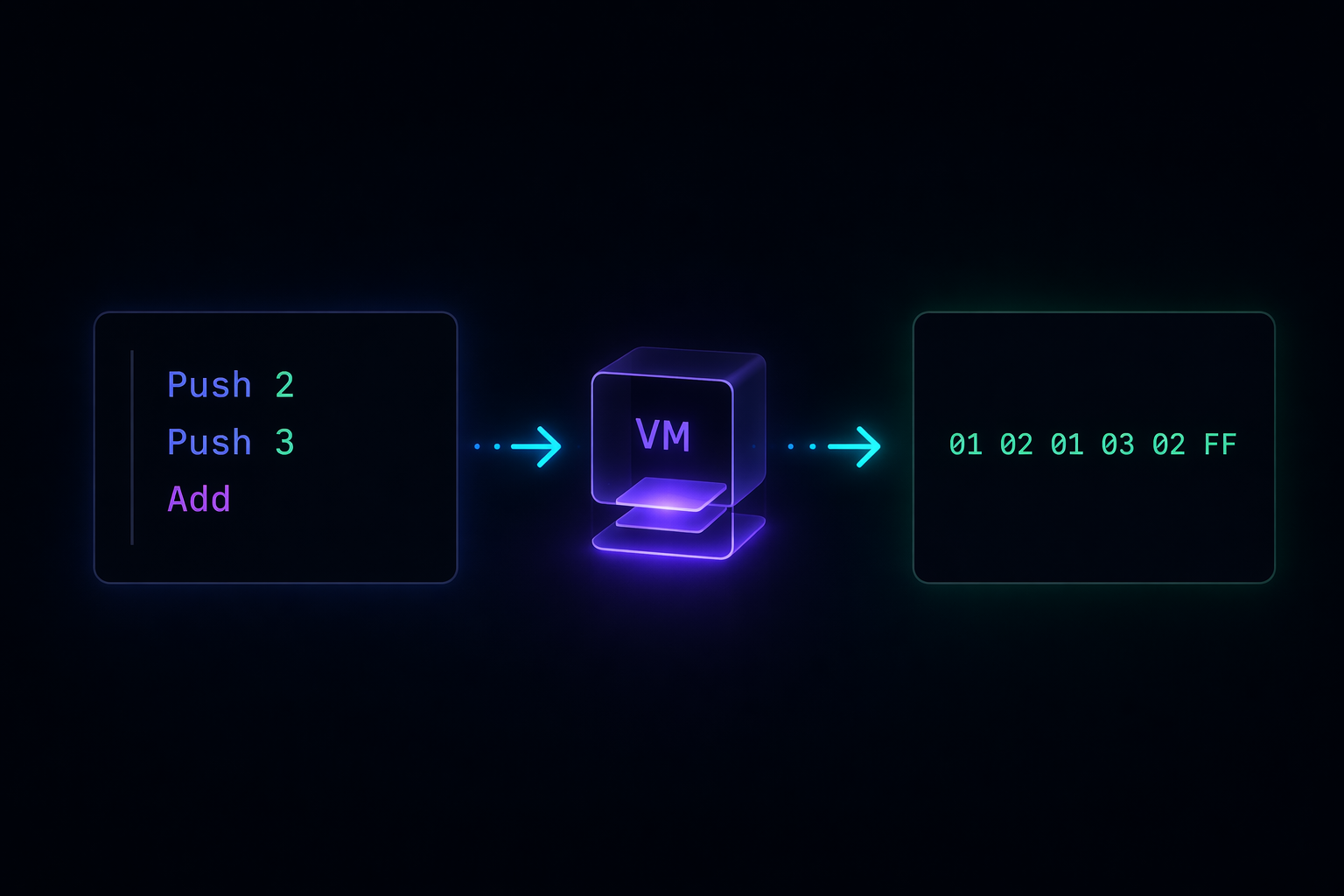

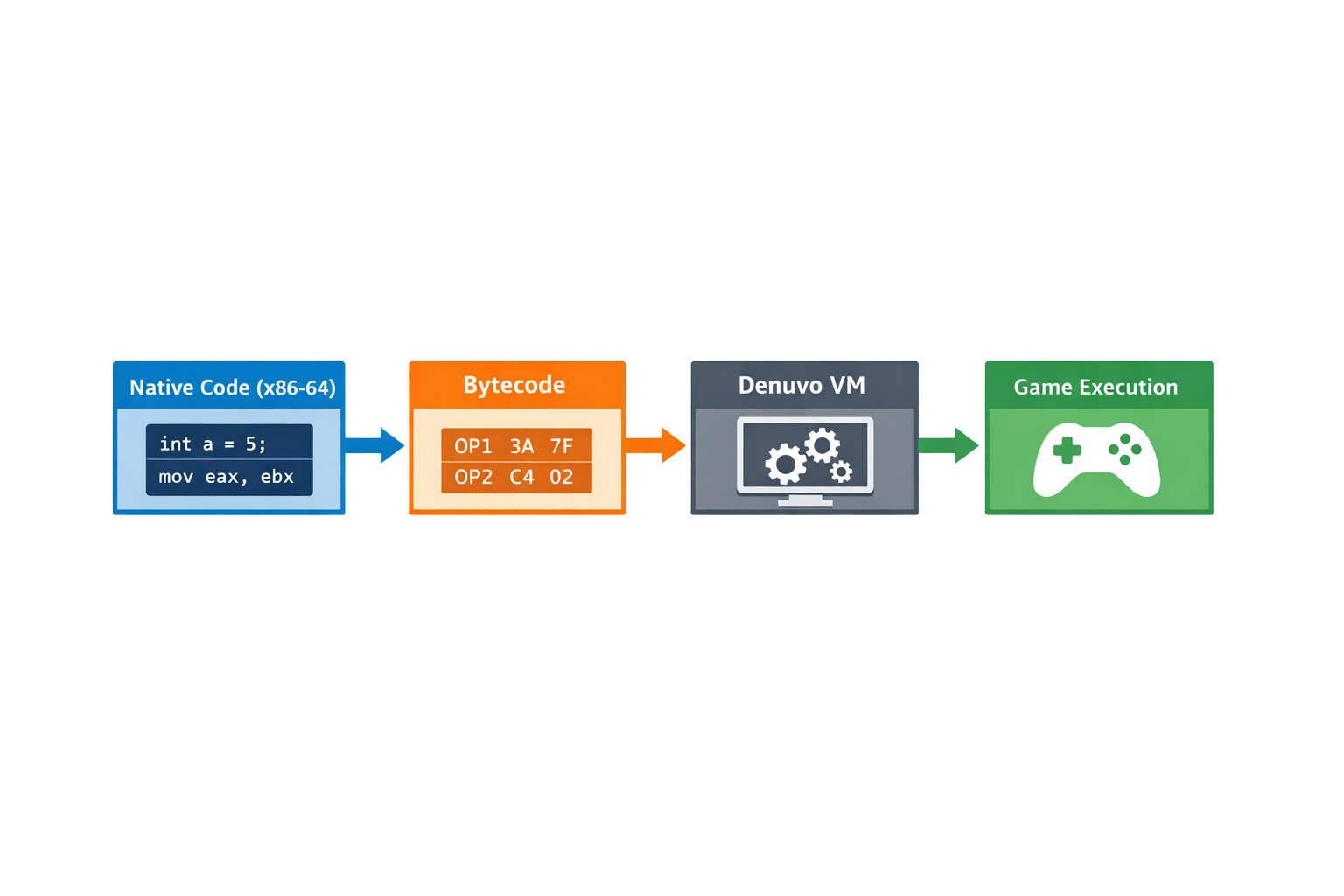

One of the ideas that stood out to me early on was code virtualization.

Instead of letting the CPU execute certain parts of the program directly, those parts are transformed into something else entirely: a custom bytecode that gets interpreted at runtime.

So when you open the binary expecting to see logic, what you actually see is an interpreter and a set of instructions that only make sense inside it.


That shift alone changes everything. You are no longer reading code. You are trying to understand a machine that is running code.
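To make the idea concrete, here is a deliberately tiny stack-based interpreter. It is a hypothetical illustration, not Denuvo's VM: the opcodes, their numbering, and the `run` function are all invented for this example. It shows the same arithmetic written once as ordinary code and once as bytecode that only has meaning inside the interpreter.

```python
# Hypothetical opcodes for a toy stack machine. Real protectors use their own,
# undocumented instruction sets; nothing here reflects Denuvo's actual design.
PUSH, ADD, MUL, XOR, HALT = range(5)


def run(bytecode: list[int]) -> int:
    """Interpret bytecode for a minimal stack VM and return the top of the stack."""
    stack: list[int] = []
    pc = 0  # virtual program counter, separate from the CPU's own
    while True:
        op = bytecode[pc]
        pc += 1
        if op == PUSH:
            stack.append(bytecode[pc])  # operand is stored inline after the opcode
            pc += 1
        elif op == ADD:
            b, a = stack.pop(), stack.pop()
            stack.append(a + b)
        elif op == MUL:
            b, a = stack.pop(), stack.pop()
            stack.append(a * b)
        elif op == XOR:
            b, a = stack.pop(), stack.pop()
            stack.append(a ^ b)
        elif op == HALT:
            return stack[-1]
        else:
            raise ValueError(f"unknown opcode {op} at {pc - 1}")


def native(x: int) -> int:
    """The original logic, as the CPU would run it directly."""
    return (x + 3) * 7


def virtualized(x: int) -> int:
    """The same logic, compiled to bytecode for the toy VM."""
    return run([PUSH, x, PUSH, 3, ADD, PUSH, 7, MUL, HALT])


print(native(5), virtualized(5))  # 56 56
```

In a disassembler, `native` is a few recognizable instructions, while `virtualized` is just a list of numbers handed to a loop. In protectors of this kind the interpreter is itself native, obfuscated code, which is what makes the second form so much harder to read.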

And it gets even more interesting when you realize that this “machine” is not fixed. Its design can change from one implementation to another, so even if you understand one case, that knowledge does not necessarily transfer cleanly to the next.
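One simple way such a machine can vary is in its instruction encoding. The sketch below is a hypothetical illustration, not Denuvo's scheme: each "build" (a seed passed to the invented `make_build` function) assigns different byte values to the same logical operations, so identical logic produces different bytecode.

```python
import random

# Logical operations of a hypothetical toy VM.
OPS = ["PUSH", "ADD", "MUL", "HALT"]


def make_build(seed: int) -> dict[str, int]:
    """Give each operation a different numeric encoding for every 'build'."""
    rng = random.Random(seed)
    codes = rng.sample(range(256), len(OPS))  # distinct byte values
    return dict(zip(OPS, codes))


def assemble(program: list, enc: dict[str, int]) -> list[int]:
    """Encode (op, *operands) tuples using one build's opcode table."""
    out: list[int] = []
    for op, *args in program:
        out.append(enc[op])
        out.extend(args)
    return out


def run(bytecode: list[int], enc: dict[str, int]) -> int:
    """Interpret bytecode, decoding opcodes with the matching build's table."""
    dec = {code: op for op, code in enc.items()}  # invert the table
    stack: list[int] = []
    pc = 0
    while True:
        op = dec[bytecode[pc]]
        pc += 1
        if op == "PUSH":
            stack.append(bytecode[pc])
            pc += 1
        elif op == "ADD":
            b, a = stack.pop(), stack.pop()
            stack.append(a + b)
        elif op == "MUL":
            b, a = stack.pop(), stack.pop()
            stack.append(a * b)
        else:  # HALT
            return stack[-1]


program = [("PUSH", 5), ("PUSH", 3), ("ADD",), ("PUSH", 7), ("MUL",), ("HALT",)]
build_a, build_b = make_build(1), make_build(2)
code_a, code_b = assemble(program, build_a), assemble(program, build_b)
print(code_a)  # one encoding of the program
print(code_b)  # same logic, different opcode bytes
print(run(code_a, build_a), run(code_b, build_b))  # 56 56
```

A decoding table learned from `build_a` is useless against `code_b`. Real implementations can vary far more than the numbering, but even this small change shows why knowledge from one case does not transfer for free.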

Another thing that really changed how I think about it is the absence of a single point of failure.

I used to imagine something like a central check. If you find it, you’re done. But that’s not how it works. The checks are spread across the entire execution. Some happen early, others much later, sometimes in places that do not look important at all.

It feels less like a lock and more like a system that constantly verifies itself.
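The difference between a single gate and checks woven through execution can be sketched in a few lines. This is a toy model with hypothetical names (`play_session`, `guard`, the stage labels); in a real protected binary the checks would be inlined and obfuscated rather than neatly named.

```python
from typing import Callable

Check = Callable[[], bool]


class IntegrityError(Exception):
    """Raised when any one of the scattered checks fails."""


def guard(check: Check, stage: str) -> None:
    if not check():
        raise IntegrityError(f"check failed at stage: {stage}")


def play_session(checks: dict[str, Check]) -> list[str]:
    """Ordinary program flow with checks placed at unrelated points."""
    events = []
    guard(checks["startup"], "startup")
    events.append("menu shown")
    events.append("level loaded")
    guard(checks["level"], "level")  # nothing about this spot looks special
    events.append("enemy spawned")
    events.append("checkpoint saved")
    guard(checks["save"], "save")  # much later in execution
    events.append("session ended")
    return events


honest = {"startup": lambda: True, "level": lambda: True, "save": lambda: True}
print(play_session(honest)[-1])  # session ended

# The startup check passes (say it was patched out), yet a later one still fails.
tampered = dict(honest, save=lambda: False)
try:
    play_session(tampered)
except IntegrityError as e:
    print(e)  # check failed at stage: save
```

Finding and neutralizing one `guard` call changes nothing about the others, which is the practical meaning of "no single point of failure".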

Then there is the environment.

The program is not just running. It is observing timing, execution patterns, and system characteristics, constantly trying to answer a simple question:

Does this look like a normal machine running normally?

If the answer is no, things start to break. Sometimes loudly, sometimes in ways that are hard to trace back.
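Timing is the easiest of these signals to illustrate. The sketch below is a generic, hypothetical check, not Denuvo's: it times a code region and reports whether it stayed within a budget, on the assumption that single-stepping or heavy instrumentation stretches execution far past normal. The clock is injectable so the logic can be demonstrated deterministically; the budgets are arbitrary example values.

```python
import time
from typing import Callable, Iterable


def looks_normal(work: Callable[[], object],
                 budget_ns: int,
                 clock: Callable[[], int] = time.perf_counter_ns) -> bool:
    """Time a code region and report whether it finished within budget.

    Single-stepping in a debugger, heavy instrumentation, or emulation can
    stretch a region far past what a normal machine needs.
    """
    start = clock()
    work()
    return clock() - start <= budget_ns


def fake_clock(readings: Iterable[int]) -> Callable[[], int]:
    """Deterministic stand-in for time.perf_counter_ns, for demonstration."""
    it = iter(readings)
    return lambda: next(it)


print(looks_normal(lambda: None, 1_000_000, fake_clock([0, 200_000])))    # True
print(looks_normal(lambda: None, 1_000_000, fake_clock([0, 5_000_000])))  # False

# Against the real clock, with a deliberately generous (hypothetical) 50 ms budget:
print(looks_normal(lambda: sum(range(10_000)), budget_ns=50_000_000))
```

A single measurement like this is noisy, which is presumably why such systems combine many signals rather than trusting one threshold.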

And then there is integrity: parts of the program checking other parts. A small change can cascade into problems much later, which makes naive approaches unreliable.
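Here is one hypothetical way a small change can surface much later instead of failing on the spot. In this sketch, which illustrates the general idea rather than Denuvo's method, a checksum of a code region is folded into a constant the program needs later. The byte string and the `damage_multiplier` name are invented for the example.

```python
import hashlib


def region_key(code: bytes) -> int:
    """Derive a 64-bit key from a code region's SHA-256 digest."""
    return int.from_bytes(hashlib.sha256(code).digest()[:8], "little")


# Hypothetical x86-64 bytes: push rbp; mov rbp, rsp; mov eax, 42; pop rbp; ret
original_code = bytes.fromhex("554889e5b82a0000005dc3")

# At protection time: fold the expected checksum into a constant used later.
KEY = region_key(original_code)
ENCODED_MULTIPLIER = 100 ^ KEY  # the plain value 100 is never stored on its own


def damage_multiplier(code_in_memory: bytes) -> int:
    # No explicit "if tampered: exit". The checksum silently feeds later logic.
    return ENCODED_MULTIPLIER ^ region_key(code_in_memory)


print(damage_multiplier(original_code))  # 100

patched = bytearray(original_code)
patched[5] = 0x00  # one-byte patch: mov eax, 42 becomes mov eax, 0
print(damage_multiplier(bytes(patched)))  # a garbage value, not 100
```

Nothing crashes at the moment of the patch. The damage appears wherever the corrupted value is eventually used, which is exactly what makes it hard to trace back.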

At some point, while reading through an independent technical analysis of a modern bypass, something clicked for me.

The interesting part was not the techniques themselves, but the mindset behind them. Instead of trying to remove all of this complexity, the approach was to work around it. To make the environment look exactly how the system expects it to look, so that everything just… passes.

That idea stuck with me.

Because it highlights something deeper. Systems like this are not just protecting code. They are defining expectations about how that code should behave in the world.

And if you can control those expectations, you can change the outcome.

What I find most valuable in all of this is not the specifics of any implementation, but the perspective it gives.

It forces you to think beyond functions and files. You start thinking in terms of execution, environment, and interaction with the system as a whole.